Flume apache

2/28/2024

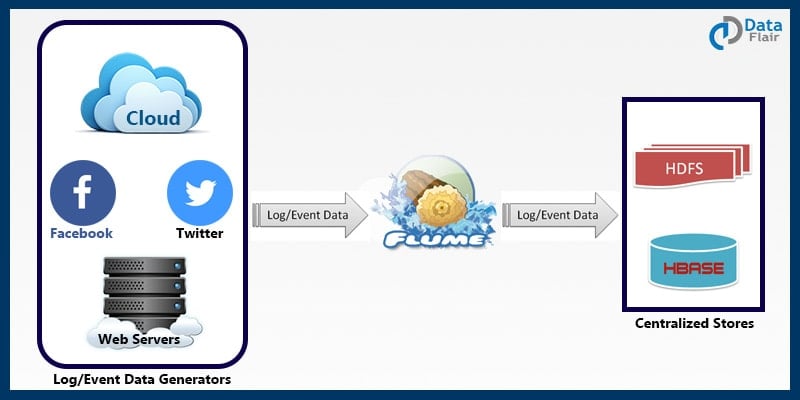

Apache Flume is an important component in the Hadoop ecosystem. In Flume, each unit of data is treated as an event, and a data source is treated as a stream of events. For example, each log entry saved on a web server can be considered an event. Flume is configured to listen to a specific data source. As soon as the source writes data, Flume consumes the event and transfers it to the configured destination. Flume can therefore be thought of as a distributed, real-time data transfer system designed to prevent data loss.

Let's consider a scenario where the logs of several web servers have to be analyzed. The logs are stored on each server as configured, and the files have to be transferred to HDFS for analysis using Hadoop. Transferring such huge files over the network is not reliable and can lead to total loss of data or a data breach, so such classic methods cannot be used for very large volumes of data. The alternative is to transfer the logs as soon as they are created and store them in the required file system. This is where Apache Flume comes into play. It takes the data from the source and writes it to the destination as configured. It can also be used to transfer files. It is useful when data has to be written to multiple locations, or when data from multiple sources has to be written to a single destination. Flume can also store the data in different locations based on various parameters.

Flume consists of three main components: Source, Channel and Sink. A Flume agent is a JVM process that hosts these three components, and data flows through them. The figure shows a simple Flume agent listening to a web server and writing the data to HDFS. The source component receives data from an external data source or from the sink of another Flume agent. The data can be transferred in different formats.
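To make the web-server-to-HDFS agent more concrete, here is a minimal sketch in Python that generates the text of a Flume properties file for one source, one channel and one sink. The property keys follow the standard Flume agent configuration format, but the component names (`r1`, `c1`, `k1`), the choice of an `exec` source running `tail -F`, the memory channel capacity and the paths used in the usage note are all assumptions made for illustration, not settings taken from this post.

```python
def build_agent_config(agent: str, log_file: str, hdfs_dir: str) -> str:
    """Return Flume properties text for one source -> channel -> sink agent."""
    # Hypothetical component names; any identifiers would do.
    src, ch, sink = "r1", "c1", "k1"
    props = {
        # Declare the three components that make up the agent.
        f"{agent}.sources": src,
        f"{agent}.channels": ch,
        f"{agent}.sinks": sink,
        # Source: follow the web server log as new lines are appended.
        # (Assumed choice; other source types may suit production better.)
        f"{agent}.sources.{src}.type": "exec",
        f"{agent}.sources.{src}.command": f"tail -F {log_file}",
        f"{agent}.sources.{src}.channels": ch,
        # Channel: in-memory queue between the source and the sink.
        f"{agent}.channels.{ch}.type": "memory",
        f"{agent}.channels.{ch}.capacity": "10000",
        # Sink: write the events to a directory in HDFS.
        f"{agent}.sinks.{sink}.type": "hdfs",
        f"{agent}.sinks.{sink}.hdfs.path": hdfs_dir,
        f"{agent}.sinks.{sink}.hdfs.fileType": "DataStream",
        f"{agent}.sinks.{sink}.channel": ch,
    }
    return "\n".join(f"{key} = {value}" for key, value in props.items())


if __name__ == "__main__":
    # Example paths are placeholders, not values from the post.
    print(build_agent_config(
        "a1",
        "/var/log/httpd/access_log",
        "hdfs://namenode:8020/flume/weblogs",
    ))
```

The printed text could be saved to a file and passed to `flume-ng agent --conf-file <file> --name a1`. Note that a source lists its `channels` (plural) because it may feed several, while a sink reads from exactly one `channel`.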
Apache Flume is a service for data collection. It takes data from several sources, such as Avro, Syslog and files, and delivers it to various destinations such as Hadoop HDFS or HBase.

Apache Flume is composed of 6 important components.

- Events: the data units that are transferred over a channel from source to sink.
- Sources: they accept data from a server or an application. Sources listen for events and write events to a channel.
- Sinks: they receive data and store it in an HDFS repository or transmit it to the source of another agent. They basically write event data to a target and remove the event from the queue.
- Channels: they connect sources and sinks by queuing event data for transactions.
- Interceptors: they drop or modify data as it flows into the system.
- Agents: they run the sources and sinks in Flume.

Apache Flume is a distributed system used for aggregating files into a single location. In simple terms, it moves data from one location to another in a reliable and efficient manner. It has built-in reliability, failover and recovery mechanisms, and is fault tolerant.
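The way these components hand events to one another can be sketched with a small toy model in Python. This is not Flume's API: the class names, the `peek`/`commit_take` methods and the two example interceptors are hypothetical, chosen only to illustrate that interceptors act before events reach the channel, and that a sink removes an event from the queue only after writing it to the target.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, Dict, List, Optional


@dataclass
class Event:
    """One unit of data: a body plus optional headers."""
    body: str
    headers: Dict[str, str] = field(default_factory=dict)


# An interceptor returns a (possibly modified) event, or None to drop it.
Interceptor = Callable[[Event], Optional[Event]]


class Channel:
    """FIFO queue that buffers events between a source and a sink."""

    def __init__(self) -> None:
        self._queue: Deque[Event] = deque()

    def put(self, event: Event) -> None:
        self._queue.append(event)

    def peek(self) -> Optional[Event]:
        return self._queue[0] if self._queue else None

    def commit_take(self) -> None:
        # Remove the head event; called only after a successful delivery.
        self._queue.popleft()

    def __len__(self) -> int:
        return len(self._queue)


class Source:
    """Accepts raw records, applies interceptors, writes to the channel."""

    def __init__(self, channel: Channel, interceptors: List[Interceptor]) -> None:
        self.channel = channel
        self.interceptors = interceptors

    def receive(self, record: str) -> None:
        event: Optional[Event] = Event(body=record)
        for intercept in self.interceptors:
            event = intercept(event)
            if event is None:
                return  # Dropped by an interceptor.
        self.channel.put(event)


class Sink:
    """Writes queued events to a target and removes them from the channel."""

    def __init__(self, channel: Channel, write: Callable[[Event], None]) -> None:
        self.channel = channel
        self.write = write

    def drain(self) -> int:
        delivered = 0
        while (event := self.channel.peek()) is not None:
            try:
                self.write(event)
            except OSError:
                break  # Leave the event queued so it can be retried later.
            self.channel.commit_take()
            delivered += 1
        return delivered


def drop_debug(event: Event) -> Optional[Event]:
    """Example interceptor: drop records that contain the word DEBUG."""
    return None if "DEBUG" in event.body else event


def tag_host(event: Event) -> Optional[Event]:
    """Example interceptor: add a header with a placeholder host name."""
    event.headers["host"] = "web01"
    return event
```

In this sketch, a `Source` built with `[drop_debug, tag_host]` queues only the non-debug records, and calling `drain()` on a `Sink` whose target is unavailable leaves every event in the channel for a later attempt.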
